Are Google AI Overviews Accurate? | SEO Video Insights

Accuracy in AI Overviews: How Do We Know it's True?

(This article originally appeared on my LinkedIn feed, but I'm also sharing it here.)

One paper released recently by Microsoft Research (https://arxiv.org/html/2604.15597v1) describes a series of experiments suggesting that LLMs (Large Language Models, a more accurate term for what we popularly call "AI") introduce degradation into documents.

That is, if you create a document that has no errors, give it to an LLM, and tell the LLM to do something with it, the document is likely to come back with one or more errors. The more you ask the LLM to do, the more errors you're likely to get.

Here's Gemini's summary of that paper (I use asterisks to designate machine-speak):

*******The authors conclude that current LLMs are unreliable delegates. While they are helpful for short tasks, they cannot yet be trusted to manage documents independently over long periods without close human supervision. The study serves as a warning that a model's success in one domain (like coding) does not guarantee its reliability in others.

___________________

"Close human supervision" is an important qualifier in that paragraph.

What Does "Corruption" Mean for Google's AI Overviews?

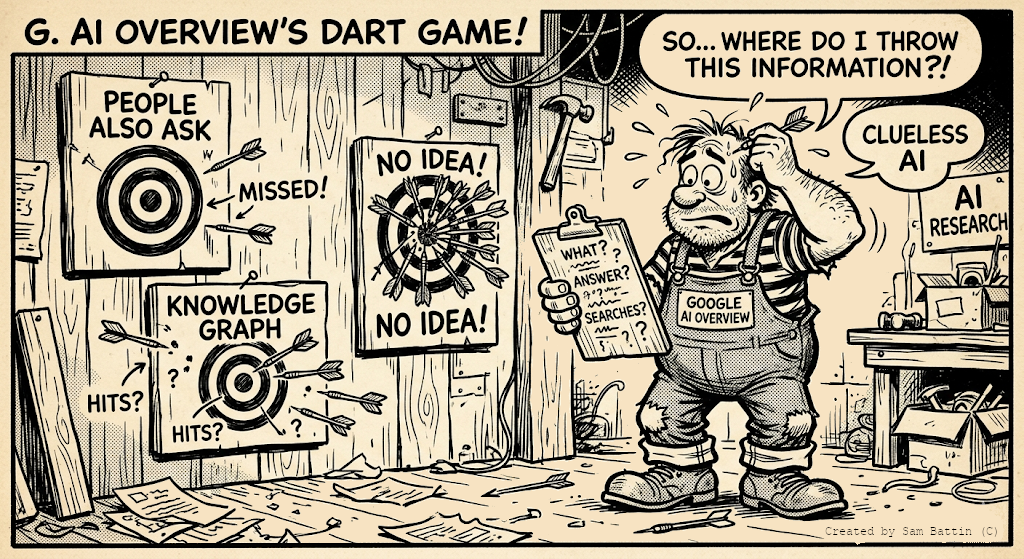

Because I work in the digital marketing space, I developed the following question:

LLMs review data from web pages and synthesize complete answers in Google AI Overviews in response to user queries. Do the conclusions of this paper tell us anything important about the accuracy of AI Overviews?

Gemini said this:

*******The study found that models don't usually fail through small, obvious typos. Instead, they produce "sparse but severe errors." This is particularly dangerous for Search users:

*******An AI Overview might look perfectly coherent and professional—what the paper calls the "agent paradox"—while silently dropping a "not" from a sentence or swapping a numerical value.

*******The paper calls this "corruption" rather than just "deletion," meaning the model often presents incorrect information as if it were part of the original source.

____________________________

How Can Companies Protect Themselves from Wrong Information Appearing in AI Overviews?

Obviously, one paper does not wreck an entire industry, and AI Overviews aren't going to disappear tomorrow. To be clear, Google does involve close human supervision in its search results, and it is working to minimize errors in AI Overviews (such as by not citing articles from The Onion).

As GEO (Generative Engine Optimization) experts, we can advise our clients on how to get reliably cited in AI Overviews.

Perhaps just as importantly, we must also advise on methods to address potential corruption in AI Overviews. This is especially important for high-stakes information like dosage instructions or pricing.
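One way to start monitoring for this kind of corruption is a simple automated comparison between a passage on your own page and the AI Overview text that cites it. The Python sketch below is only an illustration, not a method from the paper or a Google tool: the function names, the negation word list, and the sample sentences are all my own assumptions. It flags the two failure types mentioned above, a number that does not appear in the source and a negation (like "not") that has gone missing. You would need to copy the overview text in by hand or from whatever monitoring tool you already use.

```python
import re

# Assumption: a small, hand-picked list of negation words. Extend it as needed.
NEGATION_WORDS = {"not", "no", "never", "without"}


def extract_numbers(text):
    """Return the set of numbers in the text, with thousands separators removed."""
    matches = re.findall(r"\d+(?:,\d{3})*(?:\.\d+)?", text)
    return {m.replace(",", "") for m in matches}


def count_negations(text):
    """Count negation words, including contractions such as "don't"."""
    normalized = text.lower().replace("\u2019", "'")  # treat curly apostrophes as straight
    words = re.findall(r"[a-z']+", normalized)
    return sum(1 for w in words if w in NEGATION_WORDS or w.endswith("n't"))


def audit_overview(source_passage, overview_text):
    """Compare one source passage with the overview text that cites it.

    Returns a list of human-readable warnings. An empty list means this
    heuristic found nothing; it does not prove the overview is accurate.
    """
    warnings = []

    source_numbers = extract_numbers(source_passage)
    for number in sorted(extract_numbers(overview_text)):
        if number not in source_numbers:
            warnings.append(
                f"Number {number} appears in the overview but not in the source"
            )

    source_negations = count_negations(source_passage)
    overview_negations = count_negations(overview_text)
    if overview_negations < source_negations:
        warnings.append(
            f"Overview has fewer negation words ({overview_negations}) "
            f"than the source ({source_negations})"
        )

    return warnings


# Hypothetical example: both sentences are made up for illustration.
source = "Do not take more than 2 tablets in 24 hours."
overview = "Take more than 4 tablets in 24 hours."

for warning in audit_overview(source, overview):
    print(warning)
```

This check is deliberately crude. It works best on short, sentence-level comparisons, because a summary of a whole page will naturally contain fewer negations than the page itself. A human still needs to review anything it flags, and anything it doesn't flag when the stakes are high.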